Editor’s note: This article is the first part of a three-part series on child sexual abuse material (CSAM) and child sex trafficking (CST).

Disclaimer/trigger warning: This article discusses sexual offenses committed against children. Reader discretion is advised.

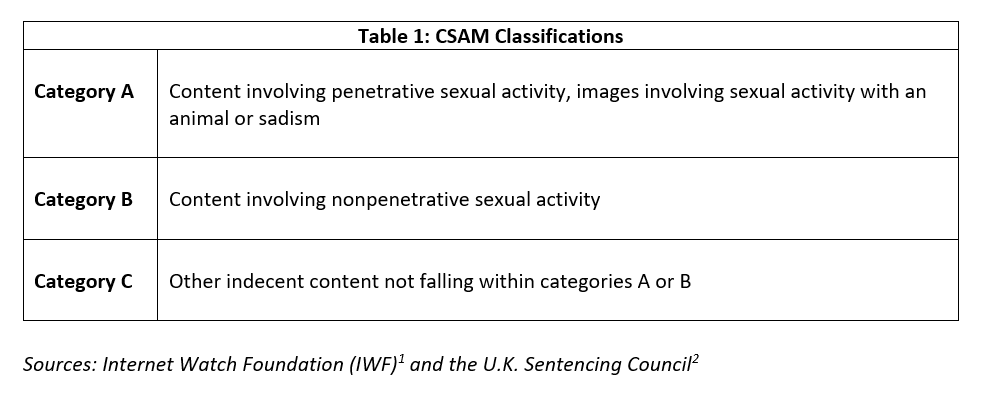

“Child pornography” is a term that many of us are familiar with, and it remains in use in many official spaces. However, the term pornography implies consent, and it can also imply a level of mainstream content. There is no consent present, as children cannot legally provide it, and there is absolutely nothing mainstream (or vanilla) about CSAM. Therefore, many investigation and law enforcement (LE) professionals, advocates and nongovernmental organizations (NGOs) have migrated to the term CSAM. The CSAM classification table included in this article was developed primarily as a sentencing guideline for criminal prosecutions, but it is also used by academia and LE. Familiarity with the classifications is valuable for informational purposes and will help provide anti-financial crime (AFC) investigators with context and further insight into the nature of CSAM crimes and content. As we know, the better investigators understand the criminal element, the better the investigation.

The numerous studies and statistics available appear to agree on one point: The volume of reported CSAM and CST crimes is rising significantly, and the severity and level of violence in these crimes are increasing. Beyond the headlines, sound bites, studies and statistics are real cases of sexual exploitation involving real children. In this series, we will look well beyond the headlines and take a closer look at the crimes and, importantly, at what we can do as financial investigation professionals and parents.

Investigating Child Sexual Exploitation

Visceral is one of the first words that comes to mind when explaining what it feels like to conduct investigations on child sexual exploitation (CSE) and CSAM. The crimes are violent and degrading in nature, and they are memorialized through the production of CSAM. Further, they are commercialized through the sale of access to the CSAM generated and/or access to children for illicit sexual encounters with adults. There is no such thing as an “easy” investigation, as each and every one of them involves unspeakable acts of evil being committed against children.

The offenders themselves are different from other criminal actors. In the CSAM space, the offenders are usually sexually motivated with little to no semblance of empathy or remorse. Given sex is their primary motive, they are more difficult for most to figure out than those with financial gain as the primary driver. That said, as is the case with many crimes, the two are not mutually exclusive and there clearly are intersections of their perversions and the commercialization of their crimes.

There are a few different categorization scales in use for CSAM. The table below is sourced from the Internet Watch Foundation (IWF), which is an internationally recognized and respected NGO specializing in the prevention of CSE. As shown in Table 1, the scale itself was sourced by the IWF from the U.K. government’s sentencing guidelines in which CSAM content is classified.

Breaking It Down

Category A: Reserved for the most egregious offenses. The official description is necessarily vague as it needs to encompass a broad range of criminal sexual acts against children. It is also vague because the nature of the crimes is so abhorrent. Sparing the specifics, CSAM forums contain commentary pertaining to the commission of contact offenses, including the abduction of a child or attempt to procure one for contact sexual offenses.

Category B: Includes any sexual acts without penetration, including oral sex, “petting” of genitals or nonpenetrative acts of masturbation. Although this category is considered less severe, it is not difficult to imagine that these acts can still be violent and degrading.

Category C: Captures offenses not falling into A or B. This may include non-nude images of children in sexualized poses or even “clean” images of children repurposed under a sexual context (e.g., a child in a bathing suit). This has been referred to as “child erotica,” and sadly, it is not illegal in all jurisdictions.

Conclusion

It can be easy to lose sight of the fact that beneath the rows of data and financial transactions are children who are being horrifically abused and/or trafficked. You, as financial investigators, are in a fantastic position to identify and report financial indicators of CSAM and CST that may have otherwise gone undetected. It is a lot of work, and it can seem overwhelming, but it does have a tremendous impact. The information shared in this series is not the easiest material to digest. It has been composed in a thoughtful manner and with discretion to avoid sharing any details that are not necessary from an AFC perspective. This series is specially designed to inform and help you better understand CSAM and CST crimes. As a result, you will be better equipped to identify and investigate cases and generate strong reports for LE.

In future segments of this series, we will have a deeper look at new and emerging trends in CSAM and CST. These will include “self-generated” CSAM and live-streaming platforms, sextortion and blackmail, child sex trafficking and the grooming tactics used to entice or coerce children. Importantly, in each, we will explore what we can do in our jobs (and in our homes) to help prevent exploitation and protect the innocent and vulnerable.

Matt Richardson, director of Intelligence and Investigations, Anti-Human Trafficking Intelligence Initiative (ATII)

- Alexis Jay, “Category A child sexual abuse material of a ‘self-generated’ nature—an IWF snapshot study,” The Internet Watch Foundation, https://www.iwf.org.uk/about-us/why-we-exist/our-research/category-a-child-sexual-abuse-material-of-a-self-generated-nature-an-iwf-snapshot-study/

- “Sexual Offenses: Response to Consultation,” The U.K. Sentencing Council, December 2013, https://www.sentencingcouncil.org.uk/wp-content/uploads/Final_Sexual_Offences_Response_to_Consultation_web1.pdf